BLOG

NREL launches geothermal storage project to address data center cooling energy consumption levels

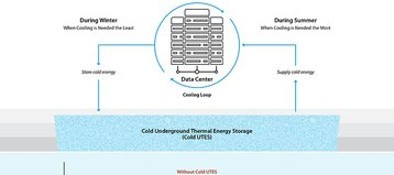

The National Renewable Energy Laboratory (NREL), a federally funded research center, has launched a new project to address the increasing energy consumption of data center cooling.

The project, funded by the US Department of Energy Geothermal Technologies Office, will incorporate geothermal underground thermal energy storage (UTES) technology at data center sites nationwide.

According to NREL, traditional cooling systems, which cool servers using chilled air or liquid, account for as much as 40 percent of a data center's annual energy consumption.

In comparison, geothermal technologies such as the Cold Underground Thermal Energy Storage (Cold UTES) project use off-peak power to create underground cold energy reserves, which can be incorporated into existing data center cooling systems and used during grid peak load hours, reducing energy consumption.

The project's main aims are to reduce strain on the grid from data centers, reduce the energy cost to data centers, and reduce the cost of data center cooling systems. The project ultimately aims to illustrate how Cold UTES can be a commercially and technically viable solution for large data center cooling loads.

Supermicro Offers Next-Generation Air-Cooled and Liquid-Cooled Architecture for NVIDIA Blackwell Platform

SAN JOSE, Calif., Feb. 5, 2025 /PRNewswire/ -- Supermicro, Inc. (NASDAQ: SMCI), a Total IT Solution Provider for AI/ML, HPC, Cloud, Storage, and 5G/Edge, is announcing full production availability of its end-to-end AI data center Building Block Solutions® accelerated by the NVIDIA Blackwell platform.

The Supermicro Building Block portfolio provides the core infrastructure elements necessary to scale Blackwell solutions with exceptional time to deployment. The portfolio includes a broad range of air-cooled and liquid-cooled systems with multiple CPU options.

Supermicro's NVIDIA HGX B200 8-GPU systems utilize next-generation liquid-cooling and air-cooling technology. The newly developed cold plates and the new 250kW coolant distribution unit (CDU) more than double the cooling capacity of the previous generation in the same 4U form factor.

The new air-cooled 10U NVIDIA HGX B200 system features a redesigned chassis with expanded thermal headroom to accommodate eight 1000W TDP Blackwell GPUs. Up to 4 of the new 10U air-cooled systems can be installed and fully integrated in a rack, the same density as the previous generation, while providing up to 15x inference and 3x training performance.

The new SuperCluster designs incorporate NVIDIA Quantum-2 InfiniBand or NVIDIA Spectrum-X Ethernet networking in a centralized rack, enabling a non-blocking, 256-GPU scalable unit in five racks or an extended 768-GPU scalable unit in nine racks.

Blackstone defends data center investment vision, but admits it's watching DeepSeek concerns "very closely"

Blackstone took a defensive stance in its latest earnings call after China's DeepSeek caused tech stocks to tumble.

Although it had previously used the call to push the benefits of AI infrastructure, Blackstone did not broach the subject until Morgan Stanley asked about DeepSeek-instigated data center buildout concerns.

The Chinese AI company's more efficient model has led many to question the rationale of building ever-larger data centers to train ever-larger models.

Blackstone COO Jon Gray said that DeepSeek was part of a broader trend, where "the cost of compute is coming down pretty dramatically. But at the same time, that's going to lead to more usage to more adoption."

Blackstone, through QTS and other investments, plans to continue to "go out and spend the big dollars to build these things," but only "based on the demand signals from our tenants."

Liquid cooling expert John Gross joins Prometheus Hyperscale as CTO

Prometheus Hyperscale has appointed liquid cooling expert John Gross as CTO.

Gross has been working with the firm, which intends to build a 1GW data center campus in Wyoming, for the last four years as lead engineering consultant.

After beginning his career in data center commissioning at Compaq Computer, Gross has worked as a principal mechanical engineer with clients including ExxonMobil, HP, and Valero Energy.

He serves as secretary of the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) TC9.9, chairs the Liquid Cooling Subcommittee for ASHRAE SSPC 127, and is a former co-lead for the Open Compute Project’s Advanced Cooling Facilities subproject.

Formerly known as Wyoming Hyperscale, Prometheus Hyperscale is building a data center campus on 58 acres of land on Aspen Mountain, a remote site southeast of Evanston in Wyoming. The plan is the brainchild of Thornock, whose family owns the land, and, in September, the company brought Trevor Neilson on board as president and rebranded as Prometheus.

The company says the Aspen Mountain facility will be "the most advanced sustainable data center in the United States" once up and running, and has already pledged to utilize liquid cooling, with waste heat put to use on a nearby farm. In May, Prometheus agreed to a deal to buy 100MW of energy from small nuclear reactor startup Oklo.

Intel posts third consecutive quarterly loss, cancels planned release of Falcon Shores AI chip

Intel is canceling its Falcon Shores AI accelerator in an effort to concentrate its resources after the company posted a third consecutive quarter of revenue decline.

In the first earnings report since former CEO Pat Gelsinger left the company, Intel’s fourth-quarter revenue was down seven percent year-on-year (YoY) to $14.3 billion, whilst full-year revenue declined by two percent YoY to $53.1bn.

Intel also saw its Data Center and AI (DCAI) quarterly revenue decline three percent YoY to $3.4bn; however, yearly revenue for the segment reached $12.8bn, a one percent increase from 2023. It was a similar story at Intel Foundry, with Q4 2024 revenue down 13 percent YoY to $4.5bn and yearly revenue down seven percent to $17.5bn.

The Falcon Shores GPU is a hybrid processor designed to support AI and HPC applications and increase performance and performance-per-watt efficiency. Described by Intel as its first multi-chiplet offering, it combines the x86 and Xe GPU hardware on a single Xeon socket chip.

Following Holthaus’ comments, her co-CEO and current Intel CFO David Zinsner gave an update on Intel foundry, telling analysts that the ramp-up of Intel 18A in the second half of 2025 “will support increased volumes and improved profitability in 2026.”

Zinsner also revealed that Intel had received $1.1bn of CHIPS Act funding from the US government in Q4 FY24 and an additional $1.1bn in January of Q1 FY25.

France and UAE to invest billions into 1GW European AI data center

France and the United Arab Emirates are set to spend €30-50 billion ($31-52bn) on a 1GW AI data center and other AI investments.

A location for the AI campus has yet to be chosen, but it will be built somewhere in France. Alongside the data center, the two countries expect to invest in chips, talent development, and the establishment of virtual data embassies that will lead to sovereign AI and cloud infrastructures in both countries.

Equinix launches data center in Paris, France

Equinix has officially launched a new data center in Paris, France.

The €350 million ($361.4m) facility, located at 9 Avenue du Marechal Juin, totals 78,910 sq ft (7,330 sqm) of colocation space across 12 data halls. The 20,745 sqm (223,297 sq ft) building offers 28.8MW in total.

A redeveloped brownfield site, PA13x will be equipped with photovoltaic panels, covering approximately 350 sqm (3,767 sq ft). A sound-dampening roof and acoustic isolation walls will reduce noise.

Some 14MW was pre-leased at PA13x back in 2022. According to Equinix earnings presentations, the first phase of the facility actually quietly launched back in Q4 2023. A second 14MW phase, announced in Q2 2024 and of which 7MW is leased, was due to officially launch in Q2 2025.

Across Paris, Equinix operates ten data centers – three xScale and seven International Business Exchange (IBX) facilities – totaling approximately 665,000 sq ft (61,000 sqm) of floor space.

Equinix partnered with Singapore’s GIC sovereign wealth fund in October 2019 to develop hyperscale facilities under the xScale label and has since partnered with the likes of PGIM and Canada Pension Plan to deploy more than a dozen hyperscale facilities globally.

Google expects 2025 capex to surge to $75bn on AI data center buildout

Google expects capital expenditure to hit $75 billion this year, with the majority going to data centers, servers, and networking.

That is more than the $58bn Wall Street expected, and significantly above 2024's $52.5bn capex spend.

While Google Cloud's revenue reached almost $12 billion for the quarter, up 30 percent, it came in below analyst expectations compiled by LSEG. They had expected a 32.2 percent increase to $12.16bn.

Google Cloud's operating income was $2.1bn, and the operating margin increased from 9.4 percent to 17.5 percent year over year.

As in Microsoft's earnings call, analysts raised the topic of Chinese AI company DeepSeek's cheaply trained model, which caused tech shares to slump earlier this year and called into question the massive investment that has gone into AI.

This year also saw the launch of Stargate, OpenAI's data center venture, which pledged to spend $100bn 'immediately.'

Meta’s Mesa, Arizona, data center comes online

Meta has brought its data center in Mesa, Arizona online.

The Mesa data center was originally planned to come online in 2023, but was likely delayed as part of a broader Meta building pause as it redesigned facilities for artificial intelligence (AI).

At the time, Meta said that the facility would span more than 2.5 million square feet (232,300 sq m) across five buildings. Meta told DCD that two of the buildings are currently online.

Around 20,000 tons of steel were erected, including structural steel and joists, and 375,000 cubic yards of concrete were poured. The company said 78 percent of construction waste was diverted to recycling centers, keeping almost 28,474 tons out of landfills.

Meta plans to spend some $60-65 billion on data centers and broader capex this year, and is developing a 2GW facility in Louisiana. This week, CEO Mark Zuckerberg said that the company plans to spend "hundreds of billions of dollars" on AI infrastructure over the long term.

TikTok to invest $3.8bn in Thai data hosting service

TikTok Pte. Ltd, the Singaporean unit of China's ByteDance, is set to invest $3.8 billion in "data hosting services" in Thailand.

The Thai Board of Investment announced that it had approved TikTok's Thai digital infrastructure project on January 29, at the same time approving a 3.25 billion baht ($96.4m) project by Siam AI Corporation for AI cloud services.

TikTok will be providing data hosting services to "support the activities of affiliated companies," with operations slated to begin in 2026.

It has not been specified whether the data hosting service will be offered from TikTok-developed and owned data centers, or whether the company intends to lease space in other data centers for the service. However, in October 2024, reports emerged that TikTok's parent company ByteDance was looking at opening a Thailand data center to support cloud and AI services.

In September 2024, Google said it would invest $1 billion in data centers in the capital city of Bangkok and the nearby coastal province of Chonburi, creating 14,000 jobs in the process.

As part of the project, Google will work with Gulf Edge to create a sovereign cloud offering in Thailand.

AWS launched a cloud region in Thailand earlier this month as part of a plan to invest $5 billion by 2037, while Microsoft also plans to build a data center region in the country.

CoreWeave brings Nvidia GB200 NVL72 instances to its cloud platform

CoreWeave is now offering Nvidia GB200 NVL72 instances on its cloud platform.

The instances are generally available via CoreWeave's Kubernetes Service, Slurm on Kubernetes (SUNK), and Mission Control platform.

The GB200 NVL72-based instances on CoreWeave connect 36 Nvidia Grace CPUs and 72 Nvidia Blackwell GPUs in a liquid-cooled, rack-scale design. They are available as bare-metal instances through CoreWeave Kubernetes Service and are scalable up to 110,000 GPUs.

CoreWeave claims that it is the first cloud provider to make Nvidia GB200 NVL72 instances generally available.

The company demonstrated a GB200 NVL72 system at one of its data centers in November of last year. The cluster delivered up to 1.4 exaFLOPS of AI compute.

In January 2025, it was reported that IBM would be using CoreWeave's cloud platform to access the Nvidia GB200 clusters, featuring the GB200 NVL72 systems for training its next generation of Granite AI models.
