BLOG

Vertiv™ Liquid Cooling Services support and enhance the entire lifecycle of liquid cooled systems

Columbus, Ohio [February 11, 2025] – Vertiv (NYSE: VRT), a global provider of critical digital infrastructure and continuity solutions, today announced the launch of Vertiv™ Liquid Cooling Services.

This offering provides customers with the tools to enhance system availability, improve efficiency, and navigate the evolving challenges of advanced liquid cooling systems with confidence. The offering is now globally available.

AI workloads continue to reshape the data center landscape, driving a significant increase in data center rack densities, with 30 kW racks now becoming the standard and some reaching up to 120 kW or higher. Operators are facing increased heat loads and higher power densities, and liquid cooling solutions that maintain operational continuity are in high demand.

The Vertiv Liquid Cooling Services offering focuses on providing seamless integration of liquid cooling systems with IT equipment and adjacent infrastructure.

Vertiv™ Liquid Cooling Services include a full range of solutions designed to support AI-driven and high-performance computing environments, providing seamless integration, long-term reliability, and operational continuity.

Overwatch, Nautilus partner on modular AI data center rollout

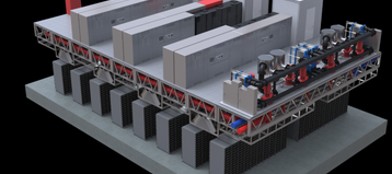

US sustainable investment firm Overwatch Capital has partnered with Nautilus Data Technologies to deploy modular, AI-ready data centers powered primarily by clean energy microgrids across the US market.

The partnership will leverage Overwatch’s modular data center development and clean energy integration with Nautilus’ EcoCore infrastructure, incorporating advanced thermal management and liquid cooling technologies.

Overwatch and Nautilus plan to deliver prefabricated modular data centers that integrate critical mechanical, electrical, and plumbing systems within a single scalable framework. According to the companies, the approach will enable rapid deployment and multi-gigawatt capacity expansion in key US markets.

Nautilus’ EcoCore infrastructure is a modular data center design with 2.5MW capacity. The company claims it enables efficient cooling of high-density GPU clusters without relying on traditional water consumption methods, reducing environmental impact while maintaining performance reliability.

In March last year, Nautilus launched the EcoCore product line and announced a "multi-megawatt" data center agreement with Start Campus. Through EcoCore, Nautilus is expanding beyond colocation and becoming a supplier to other data center operators.

HPE announces shipment of its first NVIDIA Grace Blackwell system

FEBRUARY 13, 2025 – Hewlett Packard Enterprise (NYSE:HPE) announced today that it has shipped its first NVIDIA Blackwell family-based solution, the NVIDIA GB200 NVL72.

This rack-scale system by HPE is designed to help service providers and large enterprises quickly deploy very large, complex AI clusters with advanced, direct liquid cooling solutions to optimize efficiency and performance.

The NVIDIA GB200 NVL72 features shared-memory, low-latency architecture with the latest GPU technology designed for extremely large AI models of over a trillion parameters, in one memory space. GB200 NVL72 offers seamless integration of NVIDIA CPUs, GPUs, compute and switch trays, networking, and software, bringing together extreme performance to address heavily parallelizable workloads, like generative AI (GenAI) model training and inferencing, along with NVIDIA software applications.

As power requirements and data center densities escalate, HPE says its five decades of liquid cooling expertise uniquely position the company to help customers deploy quickly, backed by an extensive infrastructure support system for complex liquid-cooled environments.

YouTuber creates the world's first water block for NVIDIA RTX 5090 FE

Well-known German enthusiast Der8auer has created a liquid cooling system for the NVIDIA RTX 5090 Founders Edition. The task is complicated by the design of the new video card.

The RTX 5090 FE's electronics consist of three printed circuit boards and use liquid metal as a thermal interface. These technical differences make it difficult to create or modify liquid cooling systems for the card. The Der8auer prototype managed to reduce the temperature of the GPU from 73.8°C to 43.8°C.

The water block is made entirely of copper, including the cooling plate and outer cover. It was designed as a proof of concept and is minimalist yet neatly designed. The transparent glass cover allows you to see the liquid flowing past the plate. The cooling element itself is made of 14mm copper and has multiple passes to cool the entire large GB202 chip.

The water block occupies two slots and has the same length and height as the standard RTX 5090 FE cooler.

Supermicro posts strong AI earnings, but warns of Blackwell supply challenges

Supermicro has said it expects net sales for Q2 2025 to be in the range of $5.6-5.7 billion, despite the company still failing to deliver its delayed annual report.

President and CEO Charles Liang said Supermicro’s financial team and its new auditor, BDO, have been “fully engaged in completing the auditor process,” and he is “confident” the report will be filed with the SEC by the February 25 deadline.

Supermicro said it expects revenues of between $23.5bn and $25bn for FY25, down on the company’s previous forecast of between $26bn and $30bn. For FY26, the company expects to see $40bn in revenue – something Liang described on the call as a “relatively conservative estimation” – above analysts' expectations for the period of $30bn.

The company said its growth was driven by demand for air-cooled and DLC (direct liquid cooling) rack-scale AI GPU platforms, with AI-related platforms contributing to more than 70 percent of the company’s revenue in Q2 across both enterprise and cloud service provider markets.

On the production front, Liang told analysts that Supermicro’s US campuses now boast 20MW of power, enabling them to produce more than 1,500 DLC GPUs per month.

This includes “expanding and enhancing” the company’s total liquid-cooled data center infrastructure solutions featuring the latest DLC technology, something that Liang said was exemplified by the xAI Colossus, the world's largest liquid-cooled AI supercomputer.

Musk's $97bn bid for OpenAI complicates fundraising and Stargate plans

Elon Musk has offered to buy the non-profit part of OpenAI for $97.4 billion.

The pitch, which Musk says is backed by a group of investors, comes as OpenAI is trying to shift to being for-profit, raise tens of billions, and spend them on the data center initiative Stargate.

The generative AI business is currently technically a nonprofit with a for-profit subsidiary, but OpenAI is planning to convert to a for-profit public benefit corporation with a non-profit division. OpenAI CEO Sam Altman is expected to receive equity as a result.

At the same time, OpenAI is in the midst of trying to raise $30-40 billion at a valuation of $300-340 billion. Much of that money would then be ploughed into Stargate, the $500 billion joint venture to develop AI data centers in the US.

OpenAI is currently expected to invest $19 billion in Stargate, but requires this funding round to close to be able to have enough cash to do so.

The owner of rival xAI donated less than $45 million to the non-profit when it was formed (however, he claimed it was $100 million), and said "It does seem weird that something can be a nonprofit, open-source and somehow transform itself into a for-profit, closed source." Musk himself tried to make OpenAI for-profit, as part of Tesla.

Altman added: "I think his whole life is from a position of insecurity, I feel for the guy. I don't think he's a happy person."

AirTrunk announces second Malaysian data center in Johor

Data center operator AirTrunk has announced plans to build a second Malaysian data center in Iskandar Puteri, Johor.

The JHB2 facility will be scalable to more than 270MW and will bring the company’s total investment in Malaysia to RM 9.7 billion ($2.2bn).

JHB2 will use liquid cooling technology and be designed to meet a PUE of 1.25, with multiple renewable energy options available to customers.
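As a rough illustration of what a PUE of 1.25 implies, power usage effectiveness is total facility power divided by IT power, so a given IT load can be scaled up to an estimated facility draw. The sketch below is only that, a sketch: treating the 270MW figure as IT load is an assumption made for the example (the announcement does not say how the capacity is measured), and the function names are hypothetical.

```python
def facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Estimate total facility power from IT load, using PUE = total / IT."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue


def overhead_power_mw(it_load_mw: float, pue: float) -> float:
    """Power spent on cooling, power conversion, and other non-IT overhead."""
    return facility_power_mw(it_load_mw, pue) - it_load_mw


# Assumption for illustration: 270 MW is treated as IT load.
it_load = 270.0
design_pue = 1.25

print(f"Total facility power: {facility_power_mw(it_load, design_pue):.1f} MW")
print(f"Non-IT overhead: {overhead_power_mw(it_load, design_pue):.1f} MW")
```

Under that assumption, roughly one extra watt of overhead is needed for every four watts delivered to IT equipment.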

This follows the launch of AirTrunk’s JHB1 facility in July 2024, after initially announcing its Malaysian expansion in early 2023. JHB1 offers 21,900 sqm (235,750 sq ft) of space across 20 data halls and more than 150MW of capacity.

AirTrunk recently announced onsite solar deployments at its JHB1 facility and signed a data center virtual power purchase agreement (vPPA) for 30MW of renewable energy under Malaysia’s Corporate Green Power Programme.

Johor has become a popular market for data center operators, with the likes of Keppel, Princeton Digital, STT GDC, Yondr, and Equinix having a presence in the region.

G42 and DataOne to establish AI data center in France

G42 is working with DataOne to establish an AI data center - powered by AMD hardware - in France.

Core42, a subsidiary of the Abu Dhabi-based AI and cloud business, will install its infrastructure at DataOne’s data center in Grenoble, south-eastern France.

Kiril Evtimov, group CTO of G42 and CEO of Core42, said, "France is taking bold strides in AI innovation, and G42 is proud to contribute to this effort. By deploying AMD GPUs, we are not only strengthening Europe’s AI infrastructure but also enabling enterprises and researchers to accelerate innovation at scale."

DataOne was established last year by infrastructure and connectivity provider BSO. With the backing of the Ardian debt fund, the company is expanding two Tier III-quality campuses in France - the Grenoble site and a data center at Villefontaine, Lyon. Currently, these data centers offer a combined 15MW of IT load, but DataOne intends to expand this to 400MW by 2028.

The data center will run on an unspecified number of AMD GPUs from the company’s Instinct range of AI chips.

The G42 announcement came as part of an international AI summit being hosted in France this week, and follows a commitment from Brookfield to spend €20 billion ($20.7bn) on French AI infrastructure over the next five years.

UK government pledges 500MW of data center power for its AI Growth Zones

The UK government says it will work with power companies to make up to 500MW of electricity available for new data center developments in each of its planned AI growth zones.

The zones are intended to be areas with favorable conditions for building AI infrastructure, with streamlined planning rules to enable developments to be approved quickly, and a sufficient supply of power.

Technology secretary Peter Kyle said the AI growth zones will “deliver untold opportunities – sparking new jobs, fresh investment, and ensuring every corner of the country has a real stake in our AI-powered future.”

The first AI Growth Zone will be built in Culham, Oxfordshire – home to the UK’s Atomic Energy Authority. AWS and CloudHQ already operate data centers near Culham.

Since taking office in July, the UK government has signaled its intention to attract more data center investment to the country. To help do this, it has designated data centers as critical national infrastructure and pledged to reform planning laws to make it easier to build new facilities on greenbelt land.

Musk's xAI considering second data center, $5bn Dell server deal

AI startup xAI is considering building a second data center, after setting up a large cluster in Memphis, Tennessee, The Information reports.

At the same time, Bloomberg reports that Elon Musk's company is in advanced talks with Dell Technologies to buy $5 billion in AI servers.

The Nvidia GB200 compute tray contains two Nvidia Grace CPUs and four Blackwell GPUs. The Memphis 'Colossus' supercomputer was built using both Dell and Supermicro servers, with a reported 100,000 Nvidia GPUs.

Musk in October said that he planned to increase the site to 200,000 GPUs, and then in December changed it to one million planned GPUs. To help fund the developments, xAI is said to be in talks to raise $10bn in a round that would value xAI at $75bn.

Alongside the buildout and fundraise, Musk is seeking to disrupt rival OpenAI's plans to go for-profit, key to funding its $500bn Stargate effort.

Meta in talks to acquire AI chip startup FuriosaAI

Meta is reportedly in talks to acquire South Korean AI chip startup FuriosaAI.

Founded in 2017 and headquartered in the South Korean capital Seoul, FuriosaAI has raised approximately $115 million across four funding rounds to support the development of its RNGD chip. The AI inference chip has a thermal design power (TDP) of 150W, and the company claims that when compared to Nvidia’s H100 GPU – which has a TDP of 350W – RNGD offers 3x better performance per watt.
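To put the performance-per-watt claim in context, the quoted figures can be combined into an implied throughput ratio. This is a back-of-the-envelope sketch, not a benchmark: it assumes both chips run at their quoted TDP and that the 3x figure applies at those power levels, neither of which the company's claim specifies. The function name is hypothetical.

```python
def implied_relative_throughput(
    perf_per_watt_ratio: float, tdp_w: float, baseline_tdp_w: float
) -> float:
    """Throughput relative to a baseline chip, assuming both run at TDP.

    throughput = (performance per watt) * watts, so the ratio of throughputs
    is the performance-per-watt ratio scaled by the ratio of power draws.
    """
    return perf_per_watt_ratio * tdp_w / baseline_tdp_w


# Figures quoted in the article; running at TDP is an assumption.
ratio = implied_relative_throughput(
    perf_per_watt_ratio=3.0, tdp_w=150.0, baseline_tdp_w=350.0
)
print(f"Implied throughput vs. baseline: {ratio:.2f}x")
```

Under these assumptions, the efficiency claim corresponds to a more modest per-chip throughput advantage, since the chip draws less than half the power of the baseline.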

RNGD is planned to enter mass production in the second half of this year.

It has long been known that Meta has been looking to develop its own chips in order to reduce its reliance on Nvidia hardware. In February 2024, according to documents seen and reported on by Reuters, the company was planning to deploy the second generation of its Meta Training and Inference Accelerator (MTIA) chip.

Meta was originally expected to roll out its in-house chips in 2022 but scrapped the plan after they failed to meet internal targets, with the shift from CPUs to GPUs for AI training forcing the company to redesign its data centers and cancel multiple projects.

Meta is not the only company looking to reduce its reliance on Nvidia by developing its own chips. Earlier this week, it was reported that OpenAI is finalizing the design for its first custom AI training chip, which will be fabricated by TSMC and made using the chipmaker’s 3nm technology.
